Source:

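UN General Assembly adopts landmark resolution calling for AI to bring “benefits” to humanity

The UN General Assembly today adopted by consensus, without a vote, a landmark resolution calling for seizing the opportunities offered by “safe, secure and trustworthy” artificial intelligence systems so that AI brings “benefits” to humanity and thereby promotes sustainable development.

Source:

UN News/Artificial Intelligence 2024/3/21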

The AI Act is done. Here’s what will (and won’t) change

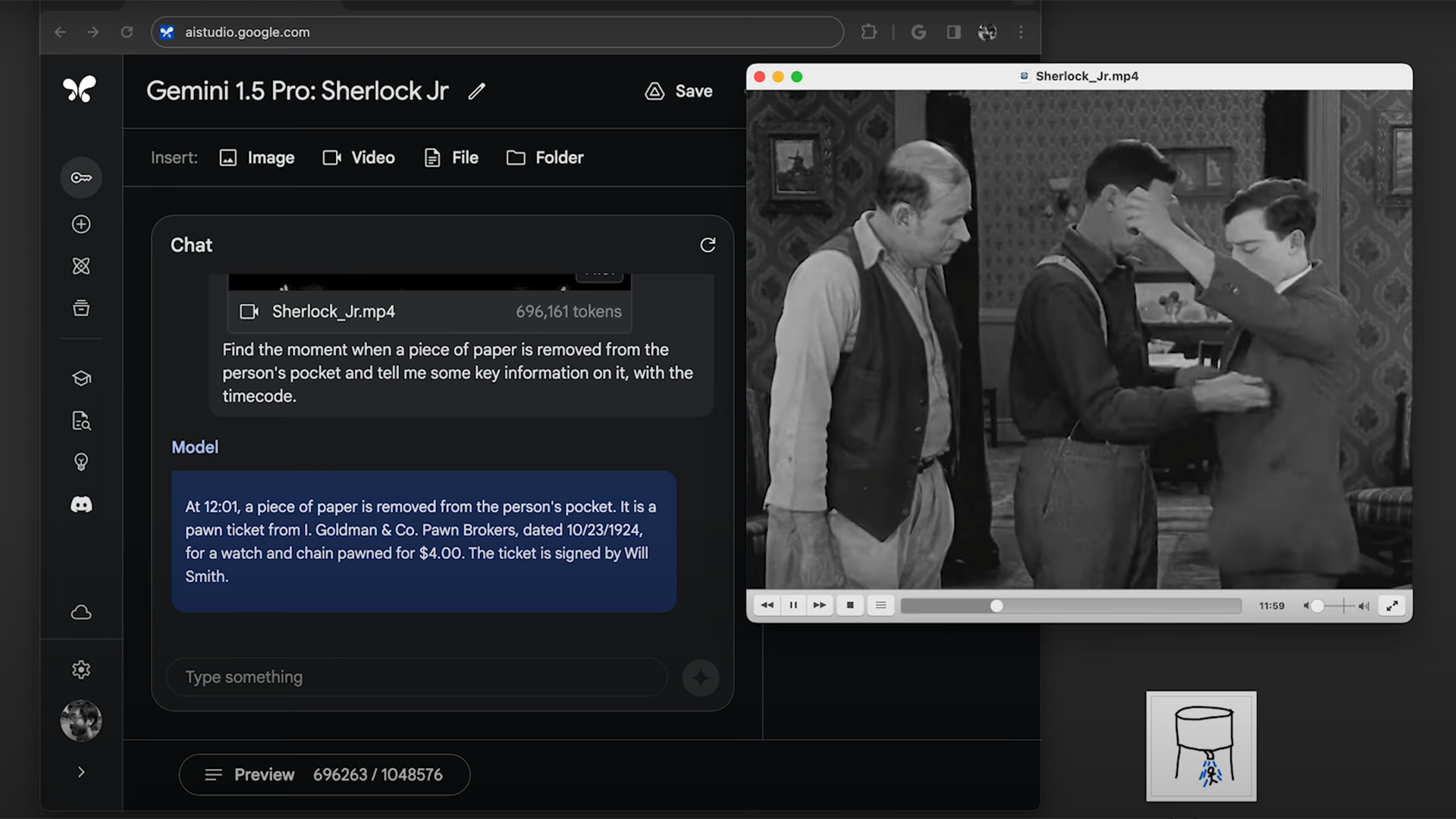

Google DeepMind’s new agent could tackle a variety of games it had never seen before by watching human players.

Source:

MIT Technology Review 2024/3/15

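This self-driving startup is using generative AI to predict traffic

Waabi says its new model can use lidar data to predict how pedestrians, trucks, and cyclists move.

Source: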

MIT Technology Review 2024/3/15

Global news partnerships: Le Monde and Prisa Media

We have partnered with international news organizations Le Monde and Prisa Media to bring French and Spanish news content to ChatGPT.

Source:

OpenAI Blog 2024/3/13

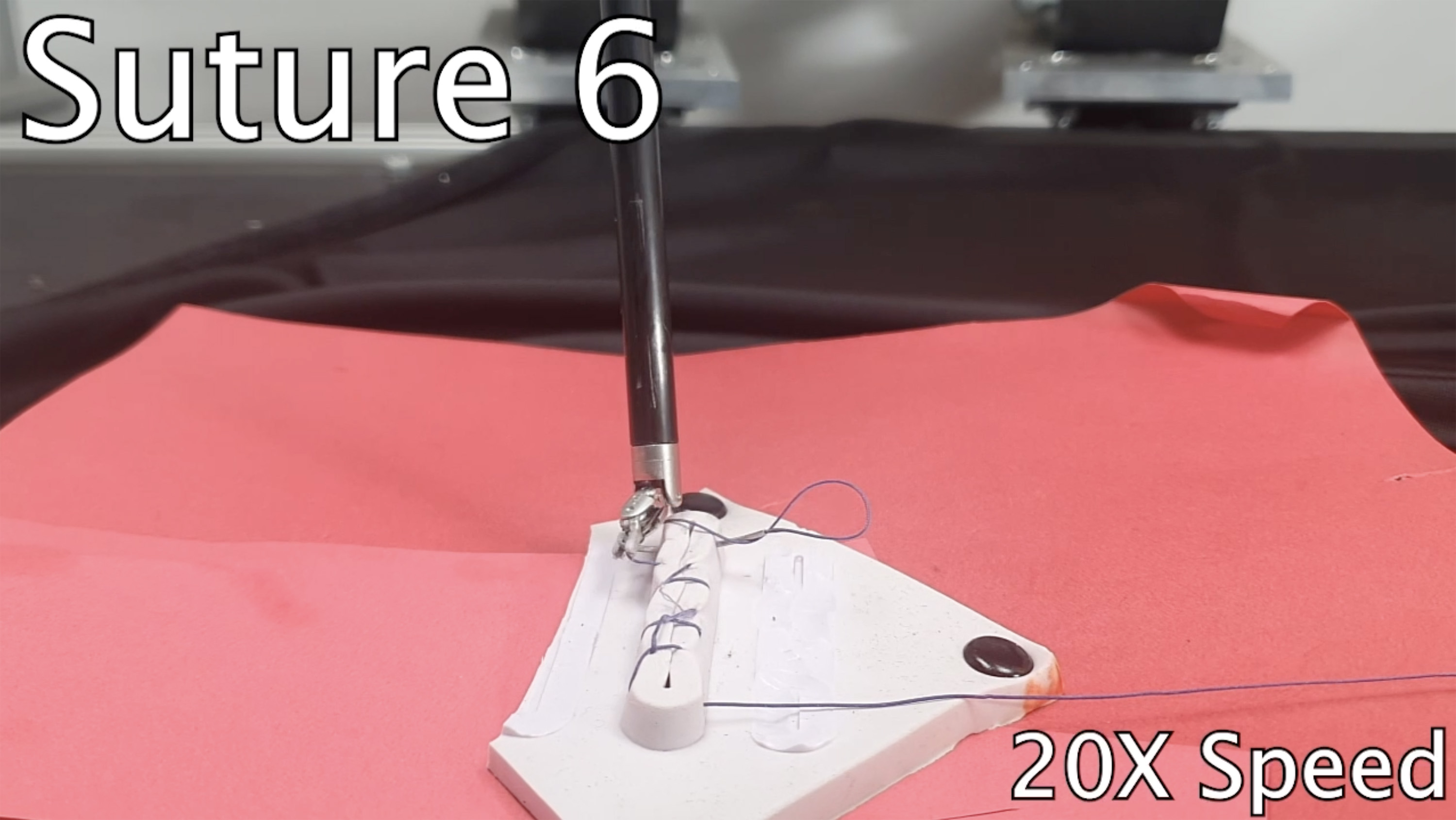

An OpenAI spinoff has built an AI model that helps robots learn tasks like humans

But can it graduate from the lab to the warehouse floor?

Source:

MIT Technology Review 2024/3/8

OpenAI announces new board members

Dr. Sue Desmond-Hellmann, Nicole Seligman, and Fidji Simo join; Sam Altman rejoins the board.

Source:

OpenAI Blog 2024/3/8

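UNESCO report sounds the alarm: generative AI exacerbates gender bias

On the eve of International Women’s Day, UNESCO released a study revealing a worrying finding: large language models (LLMs) show tendencies toward gender bias, homophobia, and racial stereotyping.

Source:

UN News/Artificial Intelligence 2024/3/7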

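Large language models can do jaw-dropping things. But nobody knows exactly why.

And that’s a problem. Figuring it out is one of the biggest scientific puzzles of our time and a crucial step toward controlling more powerful future models.

Source: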

MIT Technology Review 2024/3/4

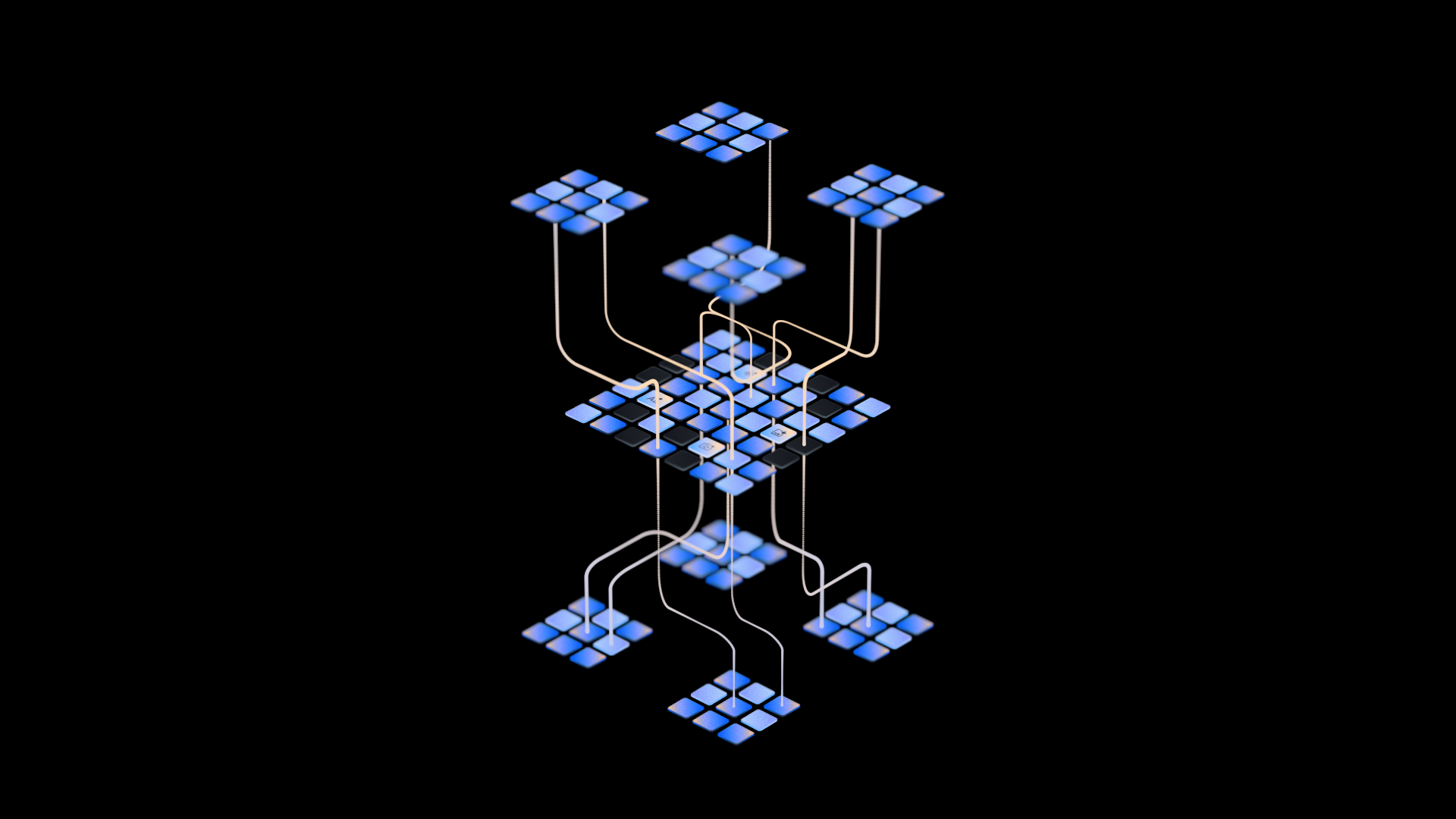

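Advancing AI innovation with cutting-edge solutions

AI is helping organizations in nearly every industry increase productivity, engage customers, achieve operational efficiencies, and gain a competitive edge. Advances in cloud supercomputing and the ability to achieve exascale-level processing are major catalysts for a new era of AI innovation. Common AI use cases today include personalized health care and…

Source: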

MIT Technology Review 2024/3/4

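Google DeepMind’s new generative model makes Super Mario–like games from scratch

Genie learns how to control games by watching hours of video. It could also help train the next generation of robots.

Source: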

MIT Technology Review 2024/2/29

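Conversational AI revolutionizes the customer experience landscape

In the ever-evolving landscape of customer experience, AI has become a beacon guiding businesses toward seamless interactions. While AI was transforming businesses long before the latest wave of viral chatbots, the emergence of generative AI and large language models represents a paradigm shift in how enterprises engage with customers and manage internal workflows. “We…

Source: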

MIT Technology Review 2024/2/26

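Transforming document understanding and insights with generative AI

At some point over the last two decades, productivity applications enabled humans (and machines!) to create information at the speed of digital, faster than any person could consume or understand it. Modern inboxes and folders are filled with information: digital haystacks holding needles of insight that too often go undiscovered. Generative AI is…

Source: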

MIT Technology Review 2024/2/20

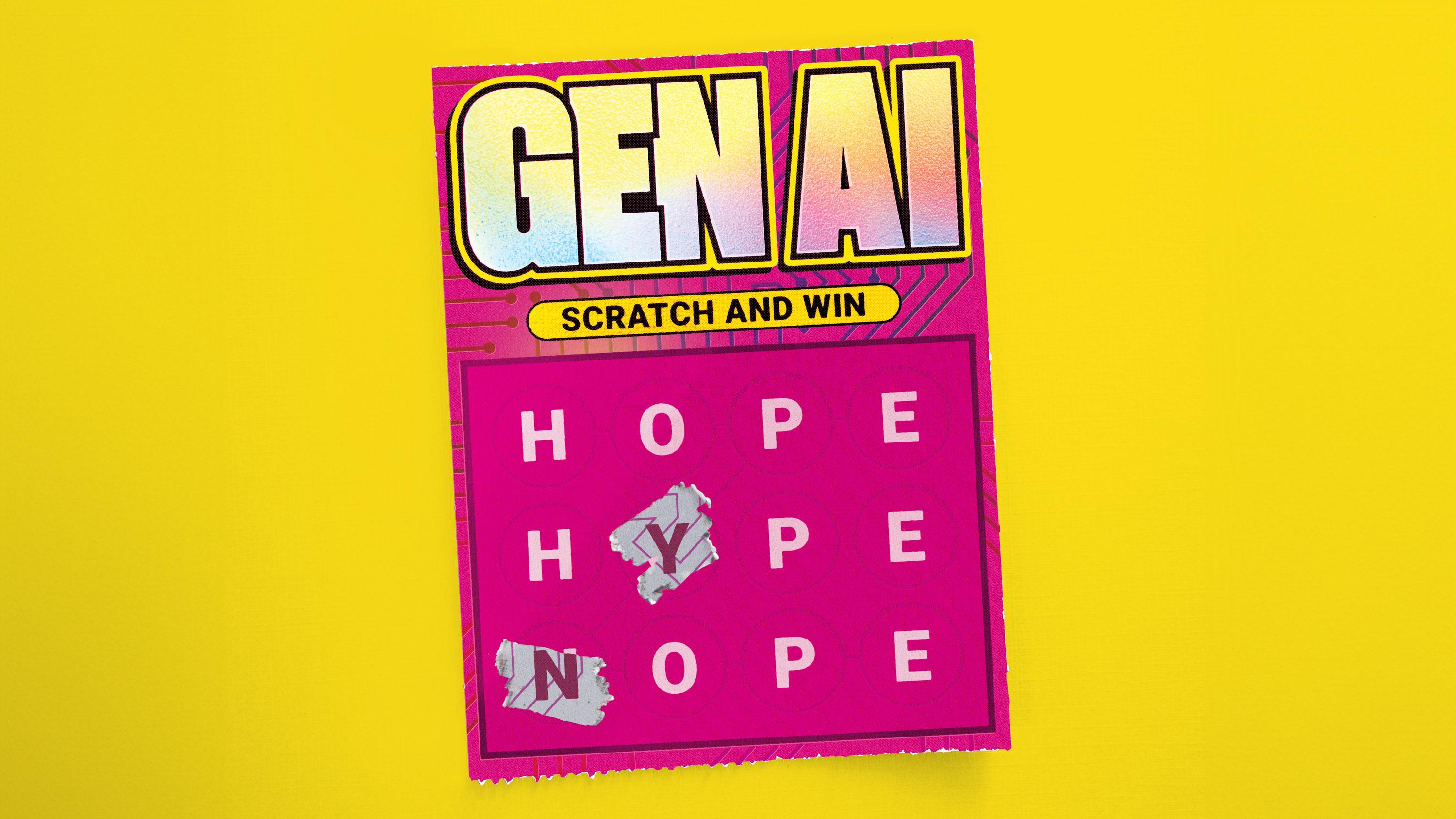

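Responsible technology use in the AI age

The sudden appearance of application-ready generative AI tools over the last year has confronted us with challenging social and ethical questions. Visions of how this technology could deeply alter the ways we work, learn, and live have also accelerated conversations, and breathless media headlines, about how and whether these technologies can be used responsibly. Responsible technology use,…

Source: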

MIT Technology Review 2024/2/15

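OpenAI unveils a stunning new generative video model called Sora

The company is sharing Sora with a small group of safety testers, but the rest of us will have to wait to learn more.

Source: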

MIT Technology Review 2024/2/15

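Providing the right products at the right time with machine learning

Whether your favorite condiment is Heinz ketchup or your preferred bagel spread is Philadelphia cream cheese, ensuring that all customers can get the products they love at the right place, at the right price, and at the right time requires careful supply chain organization and distribution. With the surge of e-commerce and shifts…

Source: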

MIT Technology Review 2024/2/14

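Disrupting malicious uses of AI by state-affiliated threat actors

We terminated accounts associated with state-affiliated threat actors. Our findings show that our models offer only limited, incremental capabilities for malicious cybersecurity tasks.

Source:

OpenAI Blog 2024/2/14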

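Google’s Gemini is now in everything. Here’s how you can try it out.

Gmail, Docs, and more will now come with Gemini built in. But Europeans will have to wait before they can download the app.

Source: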

MIT Technology Review 2024/2/11

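Eight global tech companies commit to following UNESCO’s Recommendation to build more ethical AI

UNESCO said today that eight global technology companies have signed a groundbreaking agreement with it. When designing and deploying artificial intelligence (AI) systems, these companies will integrate the values and principles of UNESCO’s Recommendation on the Ethics of Artificial Intelligence.

Source:

UN News/Artificial Intelligence 2024/2/5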

A chatbot helped more people access mental-health services

The Limbic chatbot, which screens people seeking help for mental-health problems, led to a significant increase in referrals among minority communities in England.

Source:

MIT Technology Review 2024/2/4

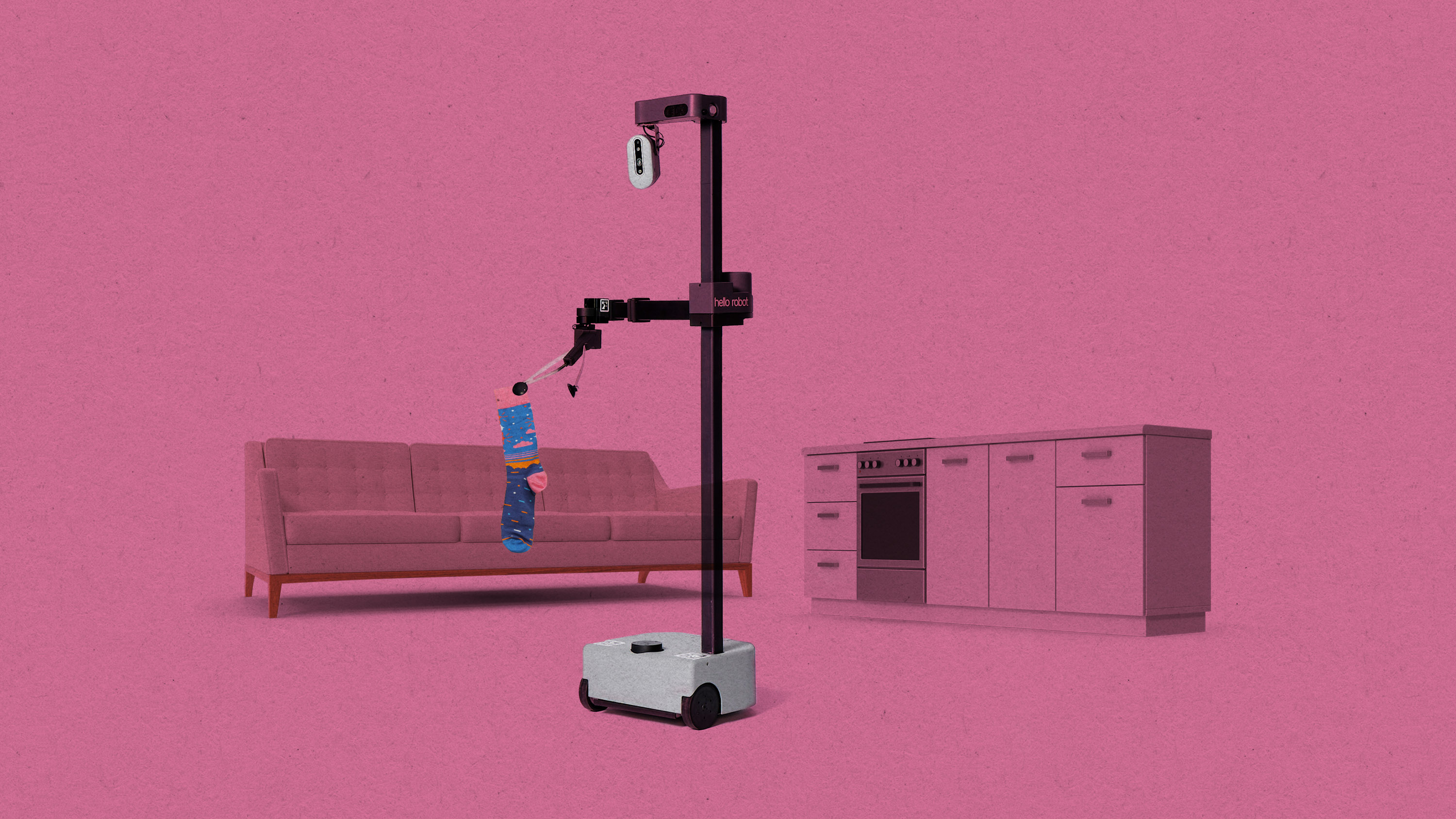

This robot can tidy a room without any help

A new system helps robots navigate homes they’ve never seen before with a little help from open-source AI models.

Source:

MIT Technology Review 2024/2/1

New embedding models and API updates

We are launching a new generation of embedding models, new GPT-4 Turbo and moderation models, new API usage management tools, and soon, lower pricing on GPT-3.5 Turbo.

Source:

OpenAI Blog 2024/1/25

Four things to know about China’s new AI rules in 2024

From drafting an AI law to zooming in on copyright and safety reviews, experts say these are Chinese AI regulators’ priorities in 2024

Source:

MIT Technology Review 2024/1/20

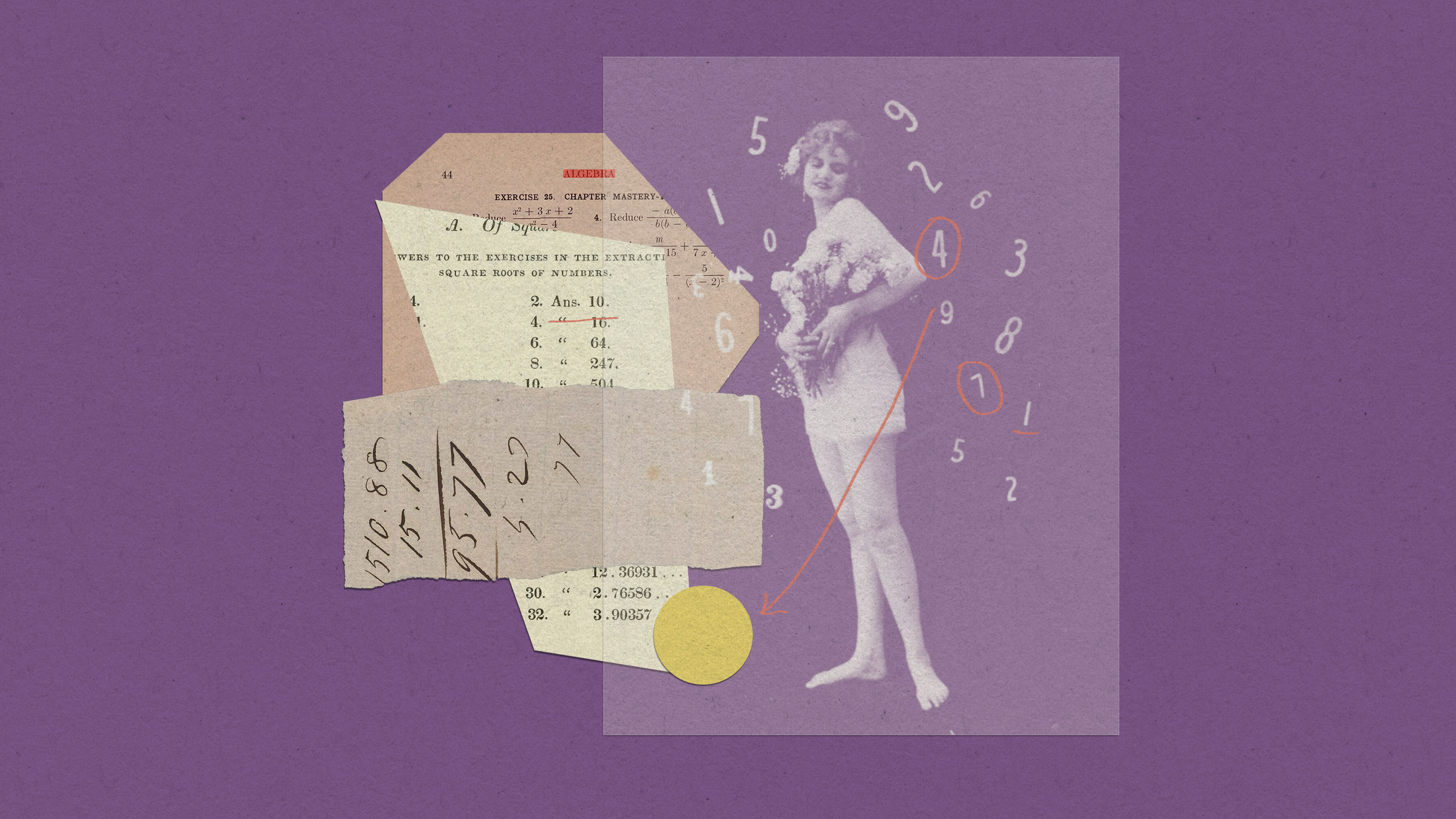

Why does AI being good at math matter?

AI systems that can solve complex math could allow us to build more powerful AI tools.

Source:

MIT Technology Review 2024/1/20

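WHO releases ethics guidance for health care applications of generative AI

The World Health Organization today released new AI ethics guidance intended to ensure that generative AI based on large multi-modal models (LMMs) is used appropriately in health care.

Source:

UN News/Artificial Intelligence 2024/1/18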

Outperforming competitors as a data-driven organization

In 2006, British mathematician Clive Humby said, “data is the new oil.” While the phrase is almost a cliché, the advent of generative AI is breathing new life into this idea. A global study on the Future of Enterprise Data & AI by WNS Triange and Corinium Intelligence shows 76% of C-suite leaders and decision-makers are planning or…

Source:

MIT Technology Review 2024/1/17

Democratic inputs to AI grant program: lessons learned and implementation plans

We funded 10 teams from around the world to design ideas and tools to collectively govern AI. We summarize the innovations, outline our learnings, and call for researchers and engineers to join us as we continue this work.

Source:

OpenAI Blog 2024/1/16

How OpenAI is approaching 2024 worldwide elections

We’re working to prevent abuse, provide transparency on AI-generated content, and improve access to accurate voting information.

Source:

OpenAI Blog 2024/1/15

Introducing the GPT Store

We’re launching the GPT Store to help you find useful and popular custom versions of ChatGPT.

Source:

OpenAI Blog 2024/1/10

Introducing ChatGPT Team

We’re launching a new ChatGPT plan for teams of all sizes, which provides a secure, collaborative workspace to get the most out of ChatGPT at work.

Source:

OpenAI Blog 2024/1/10

Deploying high-performance, energy-efficient AI

Although AI is by no means a new technology there have been massive and rapid investments in it and large language models. However, the high-performance computing that powers these rapidly growing AI tools — and enables record automation and operational efficiency — also consumes a staggering amount of energy. With the proliferation of AI comes…

Source:

MIT Technology Review 2024/1/10

What to expect from the coming year in AI

There is immense pressure on AI companies to show that generative AI can make money and produce a "killer app."

Source:

MIT Technology Review 2024/1/9

Bringing breakthrough data intelligence to industries

As organizations recognize the transformational opportunity presented by generative AI, they must consider how to deploy that technology across the enterprise in the context of their unique industry challenges, priorities, data types, applications, ecosystem partners, and governance requirements. Financial institutions, for example, need to ensure that data and AI governance has the built-in intelligence to…

Source:

MIT Technology Review 2024/1/9

OpenAI and journalism

We support journalism, partner with news organizations, and believe The New York Times lawsuit is without merit.

Source:

OpenAI Blog 2024/1/8

What’s next for AI in 2024

Our writers look at the four hot trends to watch out for this year

Source:

MIT Technology Review 2024/1/6

How machine learning might unlock earthquake prediction

Researchers are applying artificial intelligence and other techniques in the quest to forecast quakes in time to help people find safety.

Source:

MIT Technology Review 2023/12/29

These six questions will dictate the future of generative AI

Generative AI took the world by storm in 2023. Its future—and ours—will be shaped by what we do next.

Source:

MIT Technology Review 2023/12/19

Navigating a shifting customer-engagement landscape with generative AI

One can’t step into the same river twice. This simple representation of change as the only constant was taught by the Greek philosopher Heraclitus more than 2000 years ago. Today, it rings truer than ever with the advent of generative AI. The emergence of generative AI is having a profound effect on today’s enterprises—business leaders…

Source:

MIT Technology Review 2023/12/18

Superalignment Fast Grants

We’re launching $10M in grants to support technical research towards the alignment and safety of superhuman AI systems, including weak-to-strong generalization, interpretability, scalable oversight, and more.

Source:

OpenAI Blog 2023/12/14

Google DeepMind used a large language model to solve an unsolvable math problem

They had to throw away most of what it produced but there was gold among the garbage.

Source:

MIT Technology Review 2023/12/14

This new system can teach a robot a simple household task within 20 minutes

The Dobb-E domestic robotics system was trained in real people’s homes and could help solve the field’s data problem.

Source:

MIT Technology Review 2023/12/14

Partnership with Axel Springer to deepen beneficial use of AI in journalism

Axel Springer is the first publishing house globally to partner with us on a deeper integration of journalism in AI technologies.

Source:

OpenAI Blog 2023/12/13

Five things you need to know about the EU’s new AI Act

The EU is poised to effectively become the world’s AI police, creating binding rules on transparency, ethics, and more.

Source:

MIT Technology Review 2023/12/11

These robots know when to ask for help

Large language models combined with confidence scores help them recognize uncertainty. That could be key to making robots safe and trustworthy.

Source:

MIT Technology Review 2023/12/10

Google CEO Sundar Pichai on Gemini and the coming age of AI

In an in-depth interview, Pichai predicts: “This will be one of the biggest things we all grapple with for the next decade.”

Source:

MIT Technology Review 2023/12/3

Google DeepMind’s new Gemini model looks amazing—but could signal peak AI hype

It outmatches GPT-4 in almost all ways—but only by a little. Was the buzz worth it?

Source:

MIT Technology Review 2023/12/3

How AI assistants are already changing the way code gets made

AI coding assistants are here to stay—but just how big a difference they make is still unclear.

Source:

MIT Technology Review 2023/12/3

AI’s carbon footprint is bigger than you think

Generating one image takes as much energy as fully charging your smartphone.

Source:

MIT Technology Review 2023/12/3

Make no mistake—AI is owned by Big Tech

If we’re not careful, Microsoft, Amazon, and other large companies will leverage their position to set the policy agenda for AI, as they have in many other sectors.

Source:

MIT Technology Review 2023/12/3

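UN human rights chief: Human rights must be embedded in the entire life cycle and risk management of AI

UN High Commissioner for Human Rights Volker Türk stressed today at the “Generative AI and Human Rights” summit that governance of AI risks must be strengthened on the basis of human rights, while advancing responsible business conduct and ensuring accountability for companies that cause human rights harms.

Source:

UN News/Artificial Intelligence 2023/11/30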

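[Audio feature] “The UN is the only trustworthy global coordinating body for AI governance”: interview with Chinese Academy of Sciences expert Zeng Yi

To ensure that AI benefits all of humanity as much as possible while curbing the risks it may bring, UN Secretary-General António Guterres has called for a global, multi-stakeholder, multidisciplinary dialogue on AI governance, and to that end formed a high-level advisory body at the end of October this year. Zeng Yi, one of the body’s 39 experts, is a researcher at the Institute of Automation of the Chinese Academy of Sciences and deputy director of its Research Center for Brain-inspired Intelligence. He recently gave an exclusive interview to Zou Heyi of UN News, discussing the current development and challenges of AI and looking ahead to its global governance system and the work of the advisory body.

Source:

UN News/Artificial Intelligence 2023/11/29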

Sam Altman returns as CEO, OpenAI has a new initial board

Mira Murati as CTO, Greg Brockman returns as President. Read messages from CEO Sam Altman and board chair Bret Taylor.

Source:

OpenAI Blog 2023/11/29

OpenAI announces leadership transition

Source:

OpenAI Blog 2023/11/17

OpenAI Data Partnerships

Working together to create open-source and private datasets for AI training.

Source:

OpenAI Blog 2023/11/9

New models and developer products announced at DevDay

GPT-4 Turbo with 128K context and lower prices, the new Assistants API, GPT-4 Turbo with Vision, DALL·E 3 API, and more.

Source:

OpenAI Blog 2023/11/6

Introducing GPTs

You can now create custom versions of ChatGPT that combine instructions, extra knowledge, and any combination of skills.

Source:

OpenAI Blog 2023/11/6

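Guterres: Without global regulation, the risks of AI will outweigh the benefits

Attending the global AI Safety Summit in the United Kingdom today, UN Secretary-General António Guterres stressed that the principles of AI governance should be based on the UN Charter and the Universal Declaration of Human Rights, namely advancing peace and sustainable development while protecting and promoting human rights.

Source:

UN News/Artificial Intelligence 2023/11/2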

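Secretary-General forms high-level advisory body; 39 experts from around the world to deliberate on AI governance

UN Secretary-General António Guterres announced today at Headquarters in New York the formal creation of a new AI advisory body to examine the risks and opportunities the technology presents and to support the international community in strengthening governance.

Source:

UN News/Artificial Intelligence 2023/10/26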

Frontier risk and preparedness

To support the safety of highly-capable AI systems, we are developing our approach to catastrophic risk preparedness, including building a Preparedness team and launching a challenge.

Source:

OpenAI Blog 2023/10/26

Frontier Model Forum updates

Together with Anthropic, Google, and Microsoft, we’re announcing the new Executive Director of the Frontier Model Forum and a new $10 million AI Safety Fund.

Source:

OpenAI Blog 2023/10/25

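WHO: AI holds great promise for health, but regulation is key

The World Health Organization today released a new publication calling for stronger regulation of AI use in the health sector to prevent its misuse.

Source:

UN News/Artificial Intelligence 2023/10/19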

DALL·E 3 is now available in ChatGPT Plus and Enterprise

We developed a safety mitigation stack to ready DALL·E 3 for wider release and are sharing updates on our provenance research.

Source:

OpenAI Blog 2023/10/19

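Human rights expert: Privacy protection is key when AI processes personal data

In a recent report to the UN General Assembly, Ana Brian Nougrères, the UN Special Rapporteur on the right to privacy, noted that transparency not only helps establish the reliability of AI and people’s trust in the technology, but also helps promote and protect the right to privacy.

Source:

UN News/Artificial Intelligence 2023/10/16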

ChatGPT can now see, hear, and speak

We are beginning to roll out new voice and image capabilities in ChatGPT. They offer a new, more intuitive type of interface by allowing you to have a voice conversation or show ChatGPT what you’re talking about.

Source:

OpenAI Blog 2023/9/25

OpenAI Red Teaming Network

We’re announcing an open call for the OpenAI Red Teaming Network and invite domain experts interested in improving the safety of OpenAI’s models to join our efforts.

Source:

OpenAI Blog 2023/9/19

Introducing OpenAI Dublin

We’re growing our presence in Europe with an office in Dublin, Ireland.

Source:

OpenAI Blog 2023/9/13

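UNESCO issues first global guidance, urging regulation of generative AI in education

As the new school term begins, UNESCO today published the first global guidance on the use of generative artificial intelligence (AI) in education and research. It called on governments to quickly put in place appropriate regulation and teacher training so that the technology’s use in education follows a human-centred approach.

Source:

UN News/Artificial Intelligence 2023/9/7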

Join us for OpenAI’s first developer conference on November 6 in San Francisco

Developer registration for in-person attendance will open in the coming weeks and developers everywhere will be able to livestream the keynote.

Source:

OpenAI Blog 2023/9/6

Teaching with AI

We’re releasing a guide for teachers using ChatGPT in their classroom—including suggested prompts, an explanation of how ChatGPT works and its limitations, the efficacy of AI detectors, and bias.

Source:

OpenAI Blog 2023/8/31

Introducing ChatGPT Enterprise

Get enterprise-grade security & privacy and the most powerful version of ChatGPT yet.

Source:

OpenAI Blog 2023/8/28

OpenAI partners with Scale to provide support for enterprises fine-tuning models

OpenAI’s customers can leverage Scale’s AI expertise to customize our most advanced models.

Source:

OpenAI Blog 2023/8/24

GPT-3.5 Turbo fine-tuning and API updates

Developers can now bring their own data to customize GPT-3.5 Turbo for their use cases.

Source:

OpenAI Blog 2023/8/22

Using GPT-4 for content moderation

We use GPT-4 for content policy development and content moderation decisions, enabling more consistent labeling, a faster feedback loop for policy refinement, and less involvement from human moderators.

Source:

OpenAI Blog 2023/8/15

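Secretary-General: The UN is the “ideal place” to set global standards and governance approaches for AI

UN Secretary-General António Guterres stressed today at the Security Council’s first open debate on artificial intelligence that all parties must work together to develop AI in ways that bridge social, digital, and economic divides rather than pushing people further apart. He also welcomed calls from some Member States to create a new UN entity to govern the technology, saying that “we need to race against time to make AI benefit humanity.”

Source:

UN News/Artificial Intelligence 2023/7/18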

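Robots tell UN summit they will not take human jobs or rebel against humans

During the AI for Good Global Summit in Geneva, nine AI-powered humanoid robots told reporters today that they would help solve global problems, would not take human jobs, and would not rebel against humans.

Source:

UN News/Artificial Intelligence 2023/7/7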
